AMD's Data Center Reaccelerates: $5.8B, +57%, and an $11.2B Q2 Guide That Changes the Model

Q1 beat on every line, data center grew sequentially despite seasonal headwinds, Meta's 6GW MI450 deal moves forward, and CDNA 5 on TSMC N2 is AMD's most ambitious chip yet

Executive Summary — Q1 2026 Reported May 5, 2026

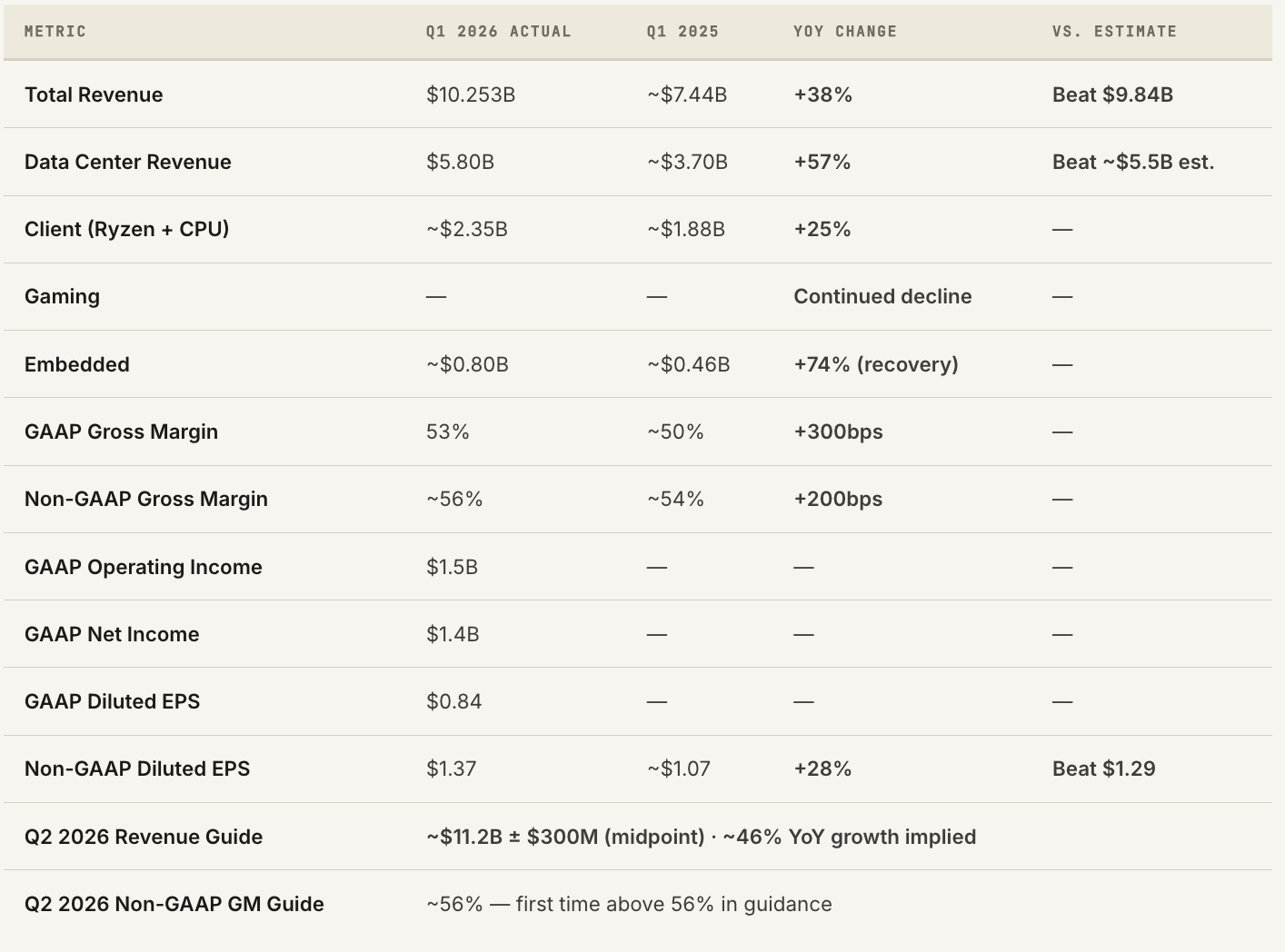

AMD delivered one of its cleanest quarters in recent memory. Revenue of $10.25 billion, up 38% year-over-year, beat the $9.84 billion estimate by $410 million. Data center revenue hit $5.8 billion, up 57% year-over-year and up sequentially even though Q1 is the seasonally softer quarter. Non-GAAP EPS of $1.37 cleared the $1.29 consensus by $0.08. The Q2 guidance midpoint of $11.2 billion implies 46% year-over-year growth and sequential acceleration, which is not what bears modeling a post-MI300 plateau expected to see.
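The arithmetic behind these headline figures can be checked with a short sketch. It uses only the numbers quoted above; the year-ago bases it backs out are implied values derived from the stated growth rates, not reported figures, and the helper name `implied_base` is ours.

```python
# Figures quoted in the summary, in billions of USD (EPS in USD per share).
revenue, revenue_est, revenue_yoy = 10.25, 9.84, 0.38
eps, eps_est = 1.37, 1.29
q2_guide_mid, q2_guide_yoy = 11.2, 0.46


def implied_base(current, growth):
    """Back out the year-ago value implied by a current value and its YoY growth."""
    return current / (1 + growth)


revenue_beat = revenue - revenue_est
eps_beat = eps - eps_est
q1_2025_revenue = implied_base(revenue, revenue_yoy)        # implied, not reported
q2_2025_revenue = implied_base(q2_guide_mid, q2_guide_yoy)  # implied, not reported
q2_sequential = q2_guide_mid / revenue - 1                  # guide midpoint vs. Q1 actual

print(f"Revenue beat: ${revenue_beat * 1000:.0f}M ({revenue_beat / revenue_est:.1%} above estimate)")
print(f"EPS beat: ${eps_beat:.2f} ({eps_beat / eps_est:.1%} above consensus)")
print(f"Implied Q1 2025 revenue: ${q1_2025_revenue:.2f}B")
print(f"Implied Q2 2025 revenue: ${q2_2025_revenue:.2f}B")
print(f"Q2 guide midpoint vs. Q1 actual: {q2_sequential:+.1%}")
```

On these inputs the Q2 midpoint sits roughly 9% above Q1 revenue, which is the sequential step the guidance discussion refers to.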

The number that changes the thesis is data center growing quarter-over-quarter in Q1. Every prior cycle of GPU revenue (Nvidia included) showed seasonal softness in Q1 as hyperscalers digested prior-year deployments. AMD’s $5.8B vs. Q4 2025’s $5.4B breaks that pattern and suggests the Meta 6GW MI450 deployment — combined with expanding EPYC server CPU dominance — is creating a demand baseline that doesn’t experience the traditional inventory digestion cycles. The CDNA roadmap from MI350 (CDNA 4, late 2026) to MI400 (CDNA 5, TSMC N2, 2027) remains the most aggressive silicon development schedule AMD has ever attempted. If Lisa Su executes, AMD exits 2027 as a genuine data center platform company rather than an opportunistic Nvidia alternative.

01

How AMD Got Here: The Three-Year Arc That Wall Street Keeps Underestimating

Three years ago, AMD’s data center GPU revenue was effectively zero. The MI250 existed as a research curiosity deployed at a handful of national laboratories. Nvidia’s H100 — announced in March 2022 — had a two-year backlog and a CUDA ecosystem so entrenched that most hyperscaler AI infrastructure teams didn’t even benchmark alternatives. AMD’s RDNA and CDNA architectures were competitive in FLOPs-per-chip but hobbled by a ROCm software stack that lacked the library breadth, the debugging tools, and the third-party support that made CUDA feel like a natural environment for AI researchers.

The MI300X changed the equation in late 2023. It was not the chip that dethroned Nvidia; it was the chip that proved AMD could design a competitive GPU for AI inference workloads. The MI300X’s HBM3 memory bandwidth (5.3 TB/s) and its 192GB of on-package memory made it genuinely superior to the H100 for large-model inference tasks where moving data, rather than compute, was the bottleneck. Microsoft, Meta, and Oracle began qualifying it. AMD’s data center GPU revenue went from near zero in Q3 2023 to $3.5 billion in Q3 2024 to $5.4 billion in Q4 2025 to $5.8 billion today. That is one of the fastest revenue ramps in semiconductor history for a product category starting from zero.

The Q1 2026 results are important not just for their headline numbers but for what they say about the structural sustainability of that ramp. AMD is no longer riding a single MI300X wave — the product is now an architecture. The MI325X succeeded MI300X with better HBM3E memory. The MI350 (CDNA 4) is qualifying at hyperscalers now for late-2026 deployment. The MI400 (CDNA 5) on TSMC’s N2 node — the first AMD AI GPU on sub-3nm silicon — is AMD’s answer to Nvidia’s Blackwell NVL72. And the Meta MI450 deal for 6 gigawatts of custom Instinct GPUs confirms that at least one of the world’s largest AI compute buyers has made AMD a strategic partner rather than a price-check vendor.

Note on Issue 153: Our prior AMD deep dive (Issue 153, published before Q1 2026 reporting) previewed Q1 at $9.8B revenue and $5.5B data center on management guidance. Actual Q1 came in at $10.25B and $5.8B — a beat on both lines with data center growing sequentially in a typically soft quarter. The Q2 guide of $11.2B is $1.0B above what we modeled as the base case in Issue 153. This article supersedes Issue 153’s forward estimates.
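As a rough check on the size of the miss in our own preview, the sketch below compares the Issue 153 figures with the reported ones. All inputs come from the note above; the Q2 base case it derives is simply the guide midpoint minus the stated $1.0B gap.

```python
# Issue 153 preview vs. reported Q1 2026, in billions of USD.
preview = {"revenue": 9.8, "data_center": 5.5}
actual = {"revenue": 10.25, "data_center": 5.8}

q2_guide_mid = 11.2
q2_guide_vs_base = 1.0  # the guide is stated as $1.0B above the Issue 153 base case

# Absolute and percentage surprise on each line.
surprise = {line: actual[line] - preview[line] for line in preview}
surprise_pct = {line: surprise[line] / preview[line] for line in preview}

# Base case that the $1.0B gap implies for Q2.
implied_q2_base_case = q2_guide_mid - q2_guide_vs_base

for line in preview:
    print(f"{line}: previewed ${preview[line]:.2f}B, actual ${actual[line]:.2f}B, "
          f"surprise ${surprise[line]:+.2f}B ({surprise_pct[line]:+.1%})")
print(f"Implied Issue 153 Q2 base case: ${implied_q2_base_case:.1f}B")
```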

02

Q1 2026 Results — Every Line a Beat

The sequential data center growth from $5.4B (Q4 2025) to $5.8B (Q1 2026) deserves particular attention. Historically, Q1 is the weakest quarter for semiconductor revenue: enterprise IT buyers close Q4 budgets, hyperscalers slow deployments, and the industry typically sees 5–10% sequential declines in the January–March quarter. AMD’s data center revenue growing $400M sequentially in Q1 is a direct signal that demand from the Meta 6GW deal, expanding EPYC server CPU penetration, and the MI325X production ramp is creating a consumption floor that doesn’t bend to seasonal patterns. That is exactly what it looked like when Nvidia began its current dominance cycle in 2023.
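To put the seasonal argument in numbers, the sketch below compares the reported Q1 figure with what a typical 5–10% sequential decline would have produced. Applying the industry-wide range to AMD's data center segment is our simplifying assumption, so the counterfactual is illustrative only.

```python
# Data center revenue in billions of USD, as quoted above.
q4_2025_dc = 5.4
q1_2026_dc = 5.8

# Typical Q1 sequential decline for the industry, per the text (5% to 10%).
# Assumption: the same range is applied to AMD's data center segment.
mild_decline, steep_decline = 0.05, 0.10

sequential_growth = q1_2026_dc / q4_2025_dc - 1
seasonal_high = q4_2025_dc * (1 - mild_decline)   # counterfactual with a 5% dip
seasonal_low = q4_2025_dc * (1 - steep_decline)   # counterfactual with a 10% dip

gap_min = q1_2026_dc - seasonal_high
gap_max = q1_2026_dc - seasonal_low

print(f"Reported sequential growth: {sequential_growth:+.1%}")
print(f"Seasonal counterfactual: ${seasonal_low:.2f}B to ${seasonal_high:.2f}B")
print(f"Reported Q1 exceeds that range by ${gap_min:.2f}B to ${gap_max:.2f}B")
```

Under that assumption, the reported quarter lands well above the top of the seasonal range rather than merely at its upper edge.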

03

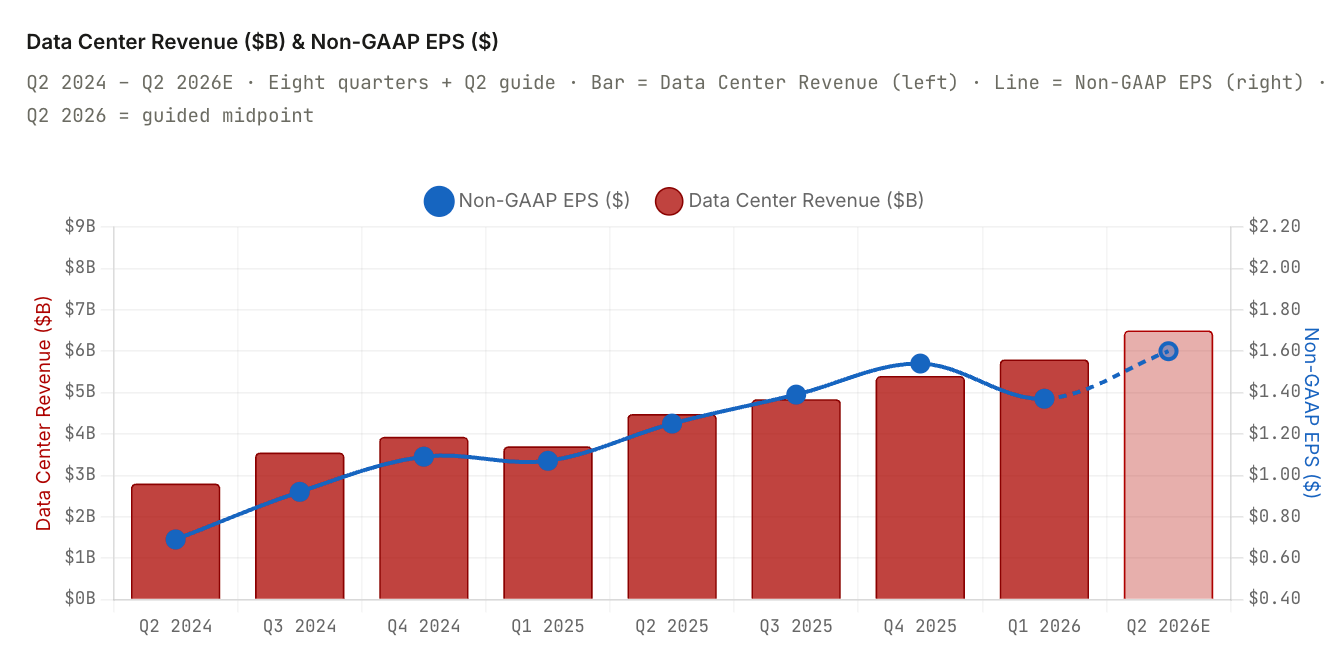

The Data Center Climb — Eight Quarters of Evidence

The chart in this section plots AMD’s data center revenue trajectory against non-GAAP EPS over the past eight quarters. The inflection that began with MI300X in late 2023 has not plateaued; it has reaccelerated. The Q2 2026 guidance bar (shown lighter) would represent AMD’s highest-ever single-quarter revenue if achieved at the midpoint.

04

The Meta 6GW Deal — What It Actually Means

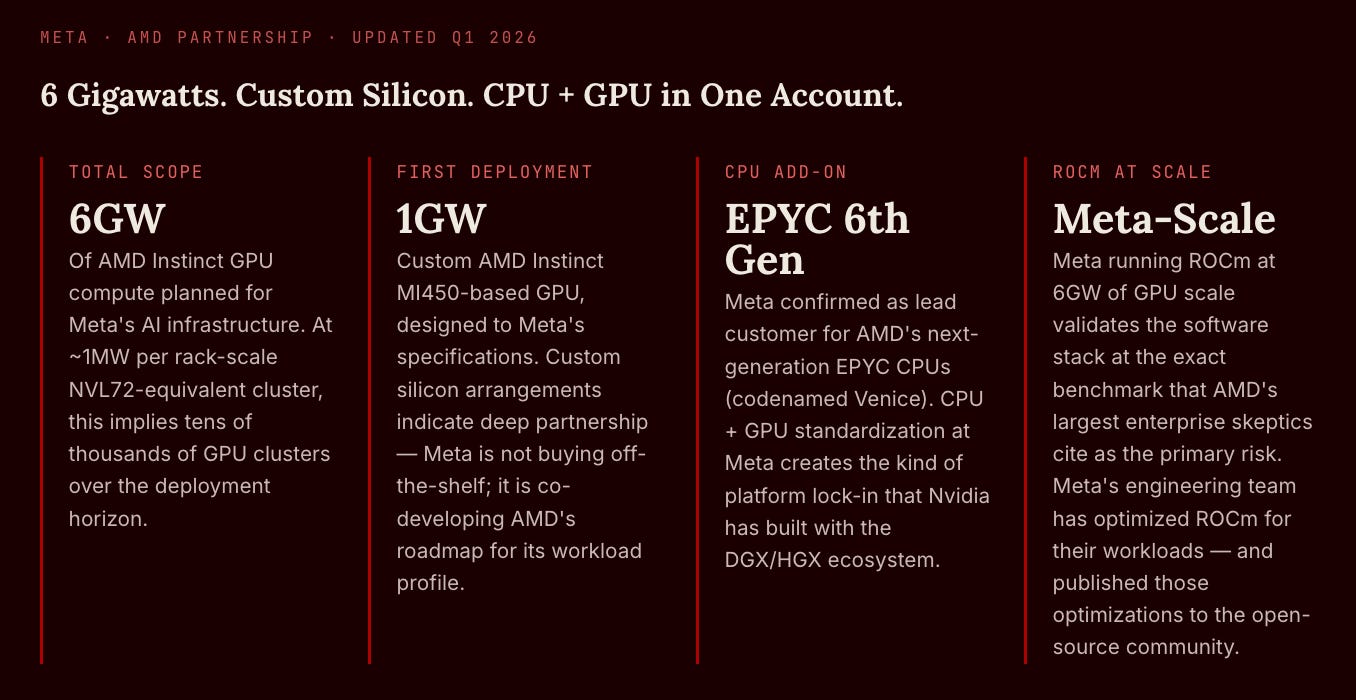

The Meta partnership, first announced with AMD’s Q4 2025 results, was updated today with specifics that change the scale calculus materially. Meta and AMD confirmed a joint deployment plan targeting up to 6 gigawatts of AMD Instinct GPU compute, with the first 1 gigawatt to be powered by a custom AMD Instinct MI450-based GPU designed specifically for Meta’s AI infrastructure requirements. Meta also confirmed it will be a lead customer for AMD’s upcoming 6th Generation EPYC CPUs.

The strategic significance of the Meta deal extends beyond its revenue impact. When the world’s largest consumer AI company (Meta runs Llama inference at a scale no other non-cloud company approaches) standardizes on AMD GPU hardware and co-develops custom silicon, it sends a signal to every other hyperscaler’s procurement team. Oracle, Microsoft, and Google are all now evaluating AMD at higher priority than they were 18 months ago — not because AMD is cheaper (the MI400 will not be meaningfully cheaper than Nvidia’s B200) but because diversification from Nvidia is now a strategic imperative, and Meta’s deployment proves AMD can perform at scale.

05

The Four Business Lines Inside AMD

Franchise 01

Data Center — GPU + CPU

The growth engine. $5.8B in Q1, +57% year-over-year, growing sequentially in a soft quarter. The revenue mix is increasingly dual-stream: Instinct GPUs (MI300X → MI325X → MI350 ramping) plus EPYC server CPUs (Genoa → Turin → Venice upcoming). EPYC now holds more than 30% of the 2-socket server market globally, a sharp reversal from the 0–2% share AMD held as recently as 2018. The combined CPU + GPU story creates a full-rack value proposition (AMD CPUs hosting AMD GPU compute in optimized configurations) that is the only credible alternative to the Nvidia DGX/HGX + Intel Xeon stack that has dominated data center procurement for a decade.

Q1: $5.8B (+57% YoY) · Sequential ↑ from Q4 $5.4B · EPYC: 30%+ 2-socket share

Franchise 02

Client — Ryzen CPU

The reliable compounding business. Client revenue of approximately $2.35B grew 25% year-over-year on continued Ryzen AI PC momentum and share gains in the consumer and commercial notebook markets. AMD’s Ryzen AI processors — which include dedicated NPU (neural processing unit) hardware for on-device AI workloads — are positioned for a Windows AI PC refresh cycle that Microsoft and OEM partners have been promoting since the Copilot+ PC launch. The client segment is not a growth story at AMD’s current scale, but it generates stable, high-margin revenue that provides operating leverage on fixed R&D costs.

Q1: ~$2.35B (+25% YoY) · Ryzen AI PCs gaining OEM design wins

Franchise 03

Gaming — Semi-Custom + dGPU

The cyclical, declining segment. Gaming revenue continues its multi-quarter normalization as PlayStation 5 and Xbox Series X semi-custom chip demand decelerates mid-console cycle and the discrete GPU market remains highly competitive against Nvidia’s GeForce RTX 50 series. Lisa Su has consistently signaled that gaming is a secondary priority relative to data center R&D investment: AMD’s RDNA 4 for consumer GPUs shares architectural DNA with CDNA but has received less engineering investment than the data center roadmap. This is the right capital allocation decision but creates ongoing gaming share risk against a competitor (Nvidia) that is similarly deprioritizing gaming relative to AI infrastructure.

Q1: Declined YoY · Console semi-custom normalizing mid-cycle · RDNA 4 competitive but secondary focus

Franchise 04

Embedded — Xilinx FPGAs

The recovery story. Embedded revenue grew approximately 74% year-over-year in Q1 — the most aggressive recovery quarter yet from the inventory digestion that plagued the segment throughout 2023–2024. Xilinx FPGAs and adaptive SoCs serve industrial automation, automotive, aerospace, and communications infrastructure markets where replacement cycles are long and qualification processes are exhaustive. The recovery is structural (inventory was burned; OEMs are reordering), not cyclical. The question is whether embedded can approach pre-digestion revenue levels ($1.5B+ quarterly) or whether some permanent share was lost to Intel Altera (which also suffered) or custom ASIC alternatives.

Q1: ~$0.80B (+74% YoY) · Recovery continuing · Pre-digestion peak: ~$1.5B/quarter
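The segment growth rates above imply year-ago bases that can be backed out directly, and the embedded figures show how far the recovery still has to run. The sketch below uses only the quoted Q1 figures; gaming is left out because no dollar figure is given for it, and the year-ago values are implied rather than reported.

```python
# Q1 2026 segment revenue (billions of USD) and YoY growth, as quoted above.
# Gaming is omitted: the text gives no dollar figure for it.
segments = {
    "Data Center": (5.80, 0.57),
    "Client": (2.35, 0.25),
    "Embedded": (0.80, 0.74),
}

# Year-ago revenue implied by each growth rate (derived, not reported).
prior_year = {name: rev / (1 + yoy) for name, (rev, yoy) in segments.items()}

# Embedded: distance from the pre-digestion peak of roughly $1.5B per quarter.
embedded_now = segments["Embedded"][0]
embedded_peak = 1.5
share_of_peak = embedded_now / embedded_peak
growth_needed = embedded_peak / embedded_now - 1

for name, (rev, yoy) in segments.items():
    print(f"{name}: ${rev:.2f}B now, implied ${prior_year[name]:.2f}B a year ago ({yoy:+.0%})")
print(f"Embedded is at {share_of_peak:.0%} of its prior peak; "
      f"it needs {growth_needed:.1%} further growth to reach ${embedded_peak:.1f}B")
```

On these numbers embedded is only a little over halfway back to its prior peak, which is why the open question about permanently lost share matters.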

06