Supermicro: Integration Boom or Temporary Peak?

Is Supermicro a Durable AI Infrastructure Winner — or a Temporary Beneficiary of Scarcity? How Much of Supermicro’s Growth Is Structural, and How Much Is Timing?

Disclaimer: This report is for informational purposes only and does not constitute investment advice, a recommendation, or a solicitation to buy or sell any security. All data is sourced from public filings, earnings releases, and third-party research.

Executive Summary

A $22 Billion Revenue Machine With 9% Gross Margins

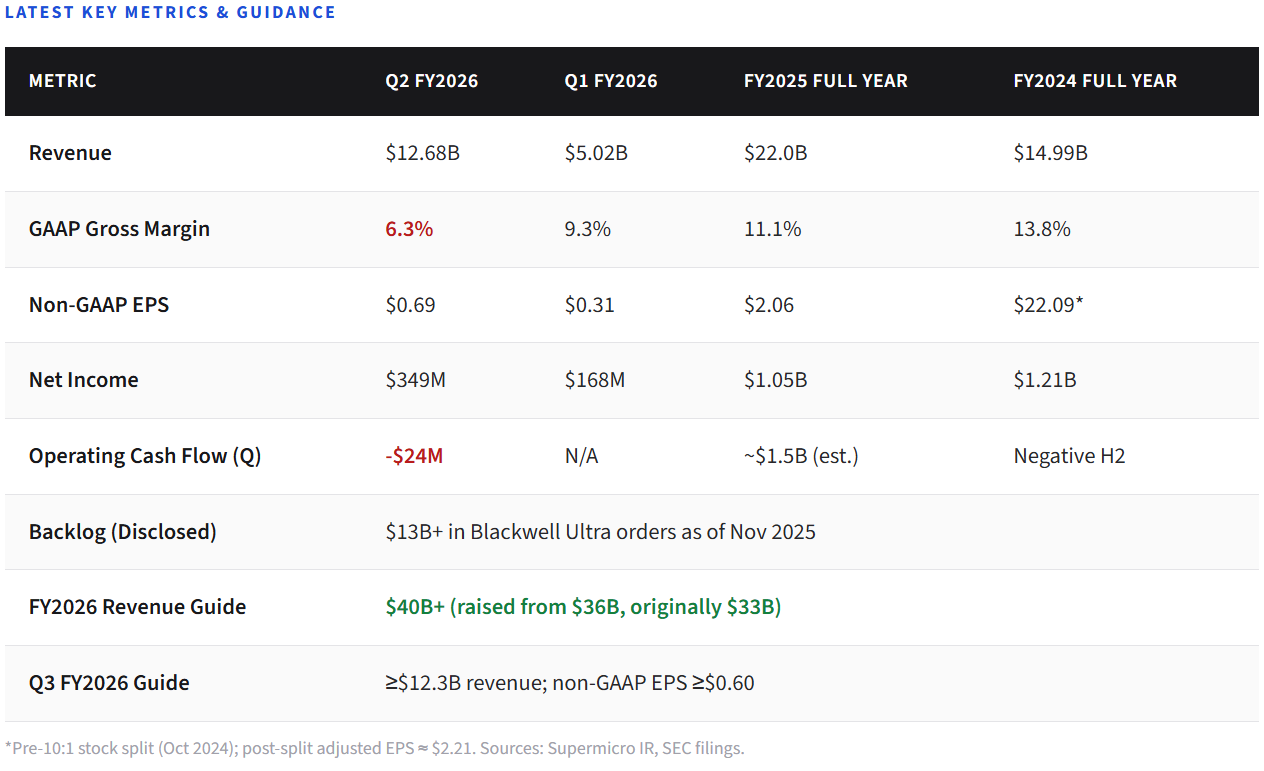

Super Micro Computer sits at the most contested intersection in technology: the layer between semiconductor innovation and deployed AI compute. In fiscal year 2025, the company grew revenue 47% to $22 billion and became one of the fastest-scaling AI server vendors in the world. In Q2 FY2026 (December 2025), revenue jumped to $12.7 billion in a single quarter, a 123% year-over-year surge driven by large Blackwell GPU deployments for customers including xAI.

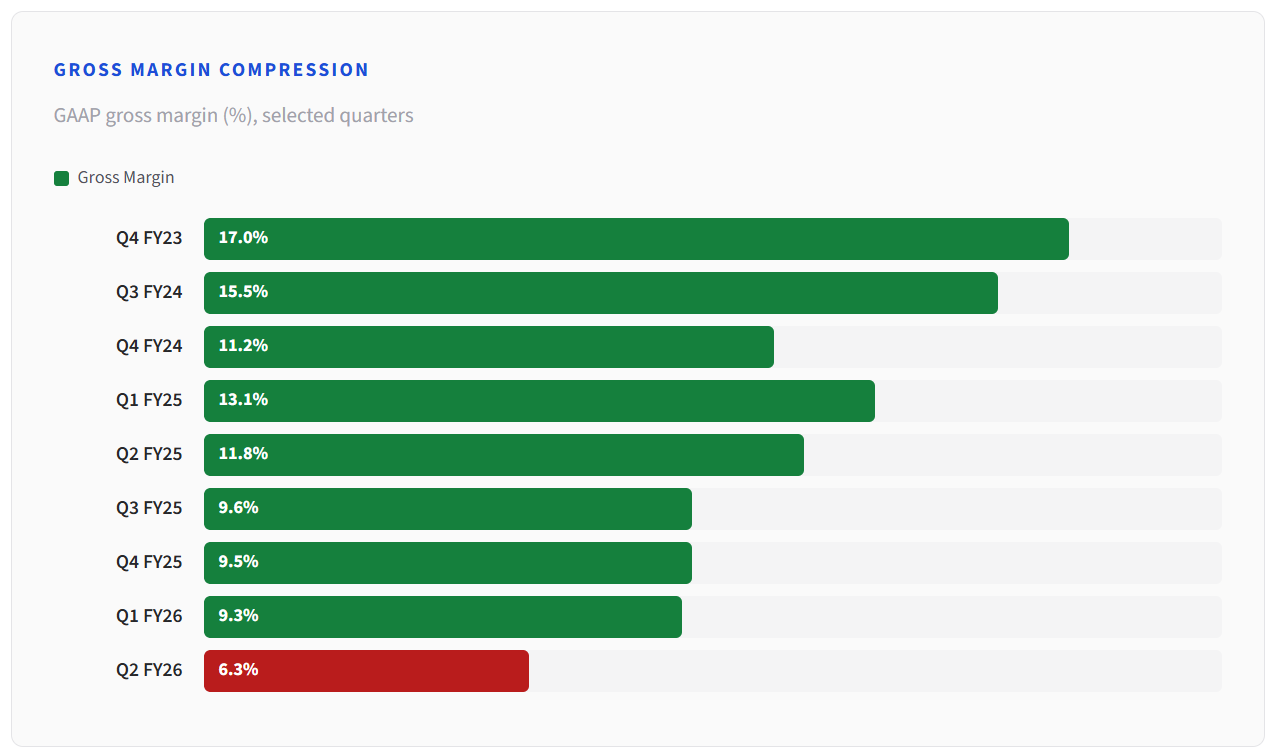

Yet beneath the headline numbers lies a harder question. GAAP gross margin has fallen from 18% in FY2023 to 6.3% in Q2 FY2026. Operating cash flow turned negative in the first half of FY2026. The company is trading margin for market share at the most aggressive point in the AI infrastructure buildout. With more than $13 billion in Blackwell Ultra backlog and FY2026 guidance raised to at least $40 billion, the revenue trajectory is extraordinary. The central question investors must resolve is whether this represents durable strategic positioning or a temporary, margin-compressing surge in a cyclical hardware market.
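As a quick check on the guidance, the implied FY2026 growth rate follows directly from the $22 billion FY2025 base and the $40 billion guidance floor quoted above. This is a minimal sketch; because both inputs are rounded and the guidance is open-ended ("$40B+"), the result is an approximate lower bound, not a precise figure.

```python
# Figures quoted in this report, in USD billions (both are rounded).
FY2025_REVENUE = 22.0   # FY2025 reported revenue
FY2026_GUIDANCE = 40.0  # floor of the raised FY2026 guidance ("$40B+")


def implied_growth(base: float, target: float) -> float:
    """Year-over-year growth rate needed to move from `base` to `target`."""
    return target / base - 1.0


growth = implied_growth(FY2025_REVENUE, FY2026_GUIDANCE)
print(f"Implied FY2026 growth at the guidance floor: {growth:.0%}")  # 82%
```

At the guidance floor this works out to roughly 82%, consistent with the "roughly 80%" figure in the takeaways.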

This report builds both sides of that case, examines the financial quality in detail, and offers a framework for monitoring the answer as it unfolds.

Key Takeaways

01

Revenue scale is real and accelerating. FY2025 delivered $22B (up 47%). Q2 FY2026 alone hit $12.7B. Full-year FY2026 guidance was raised to $40B+, implying growth of roughly 80% at the low end of the range.

02

Margins are compressing, not expanding. GAAP gross margin fell from 15.5% in Q3 FY2024 to 6.3% in Q2 FY2026. This trajectory reflects competitive pricing, ramp costs, and the economics of GPU-dense system assembly.

03

DCBBS is the strategic bet. Supermicro’s Data Center Building Block Solutions aim to make it a one-stop shop for complete AI data center buildouts (compute, cooling, power, networking), potentially upgrading its role from assembler to infrastructure general contractor.

04

Competition is intensifying, not receding. Dell, HPE, Lenovo, and ODMs like Foxconn and Pegatron are all scaling AI server operations. Gross margins below 20% industry-wide suggest structural pricing pressure.

05

The central question is margin durability. Revenue growth in hardware assembly does not automatically translate to durable economic value. Whether DCBBS and liquid cooling shift the margin trajectory will define SMCI’s investment case.
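To put the margin question in dollar terms, the sketch below applies several gross-margin levels cited in this report (the 6.3% Q2 FY2026 trough, the ~9% headline level, and the 11.8% year-ago quarter) to the $40 billion FY2026 guidance floor. It is a hypothetical scenario table, not a forecast: it assumes a single margin applies to the full year's revenue and ignores operating expenses, product mix, and timing.

```python
# Hypothetical margin scenarios applied to the FY2026 guidance floor.
# Revenue and margin inputs are figures quoted in this report; the scenario
# framing (one margin for the whole year, no opex) is an assumption.
GUIDED_REVENUE_B = 40.0  # FY2026 revenue guidance floor, USD billions

SCENARIOS = {
    "Q2 FY2026 GAAP margin (6.3%)": 0.063,
    "Headline level (9%)": 0.09,
    "Q2 FY2025 GAAP margin (11.8%)": 0.118,
}


def gross_profit(revenue: float, margin: float) -> float:
    """Gross profit in the same units as `revenue`."""
    return revenue * margin


results = {label: gross_profit(GUIDED_REVENUE_B, m) for label, m in SCENARIOS.items()}
for label, gp in results.items():
    print(f"{label}: ${gp:.2f}B gross profit")

# Gross profit attached to one percentage point of margin at this revenue scale.
per_point = gross_profit(GUIDED_REVENUE_B, 0.01)
print(f"Each point of gross margin: ${per_point:.2f}B")
```

At this scale each percentage point of gross margin is worth about $0.4 billion of gross profit, which is why the margin trajectory can matter as much as the revenue line.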

Section I

Why Supermicro Matters Now

The AI infrastructure buildout is the largest capital expenditure wave in enterprise technology history. Hyperscalers, sovereign AI programs, AI labs such as xAI, and “neocloud” operators like CoreWeave are deploying GPU clusters at a pace that strains every link in the supply chain. In this environment, the ability to integrate semiconductor components into deployable systems quickly has become a strategic chokepoint.

Supermicro has emerged as one of the primary system-layer beneficiaries. The company is not a chip designer. It does not own the GPU (Nvidia), the memory (SK Hynix, Micron), or the networking fabric (Broadcom, Arista). What it does — and does faster than most competitors — is assemble and integrate those components into rack-scale, liquid-cooled compute systems that customers can deploy. In a market where time-to-deployment is measured in weeks rather than quarters, that speed advantage has proven economically significant.

But speed-to-market and strategic moat are not the same thing. Supermicro matters now because it sits at the busiest intersection in tech infrastructure. Whether it still matters in five years depends on whether the integration layer it occupies becomes structurally valuable — or whether it remains a high-velocity, low-margin assembly operation.

Section II

What the Latest Results Actually Show

Supermicro’s most recent reported quarter is Q2 FY2026 (December 2025), which revealed a dramatic revenue acceleration alongside continued margin compression. Revenue surged to $12.68 billion, exceeding the $10.3 billion consensus by 23% and representing a 123% year-over-year increase. The company also reported Q4 FY2025 and Q1 FY2026 results that help frame the trajectory.
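The size of the beat and the implied year-ago base follow from two simple ratios. A minimal sketch using the figures reported above; since the 123% growth rate is rounded, the back-calculated Q2 FY2025 revenue is approximate.

```python
# Reported figures for Q2 FY2026, USD billions.
Q2_FY2026_REVENUE = 12.68
CONSENSUS_ESTIMATE = 10.3   # consensus cited in this report
YOY_GROWTH = 1.23           # "123% year-over-year increase" (rounded)

# Size of the beat relative to consensus.
beat = Q2_FY2026_REVENUE / CONSENSUS_ESTIMATE - 1.0

# Back out the year-ago quarter: current = prior * (1 + growth).
implied_q2_fy2025 = Q2_FY2026_REVENUE / (1.0 + YOY_GROWTH)

print(f"Beat vs. consensus: {beat:.0%}")                          # 23%
print(f"Implied Q2 FY2025 revenue: ${implied_q2_fy2025:.2f}B")   # $5.69B
```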

The revenue acceleration was driven by volume deployments of Nvidia GB300 NVL72 and AMD MI355 platforms, with xAI’s Colossus 2 cluster a notable customer engagement. Management disclosed more than $13 billion in Blackwell Ultra backlog and raised FY2026 revenue guidance from $36 billion to at least $40 billion.

However, the profitability picture tells a different story. GAAP gross margin fell to 6.3% in Q2 FY2026, down from 11.8% in the year-ago quarter. Operating cash flow was negative in the first half of FY2026, and total liabilities grew significantly. The company is clearly prioritizing volume and market share over near-term profitability — a rational strategy during a buildout boom, but one that demands margin recovery to justify the business model long-term.
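The margin squeeze is easiest to see in gross-profit dollars. This sketch applies each quarter's GAAP gross margin to its revenue, assuming the year-ago revenue is backed out from the reported 123% growth rate (so it is approximate rather than the filed figure).

```python
# Inputs quoted in this report (USD billions and GAAP gross margins).
REVENUE_Q2_FY2026 = 12.68
MARGIN_Q2_FY2026 = 0.063
MARGIN_Q2_FY2025 = 0.118
YOY_GROWTH = 1.23  # reported 123% growth, used to back out year-ago revenue

# Approximate year-ago revenue implied by the rounded growth rate.
revenue_q2_fy2025 = REVENUE_Q2_FY2026 / (1.0 + YOY_GROWTH)

gp_now = REVENUE_Q2_FY2026 * MARGIN_Q2_FY2026
gp_year_ago = revenue_q2_fy2025 * MARGIN_Q2_FY2025
gp_growth = gp_now / gp_year_ago - 1.0

print(f"Gross profit, Q2 FY2026: ${gp_now:.2f}B")        # $0.80B
print(f"Gross profit, Q2 FY2025: ${gp_year_ago:.2f}B")   # $0.67B
print(f"Gross profit growth: {gp_growth:.0%} vs. 123% revenue growth")  # 19%
```

On these inputs, revenue more than doubled while gross profit grew only about 19%, which is the arithmetic behind the volume-over-profitability trade-off.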

Section III

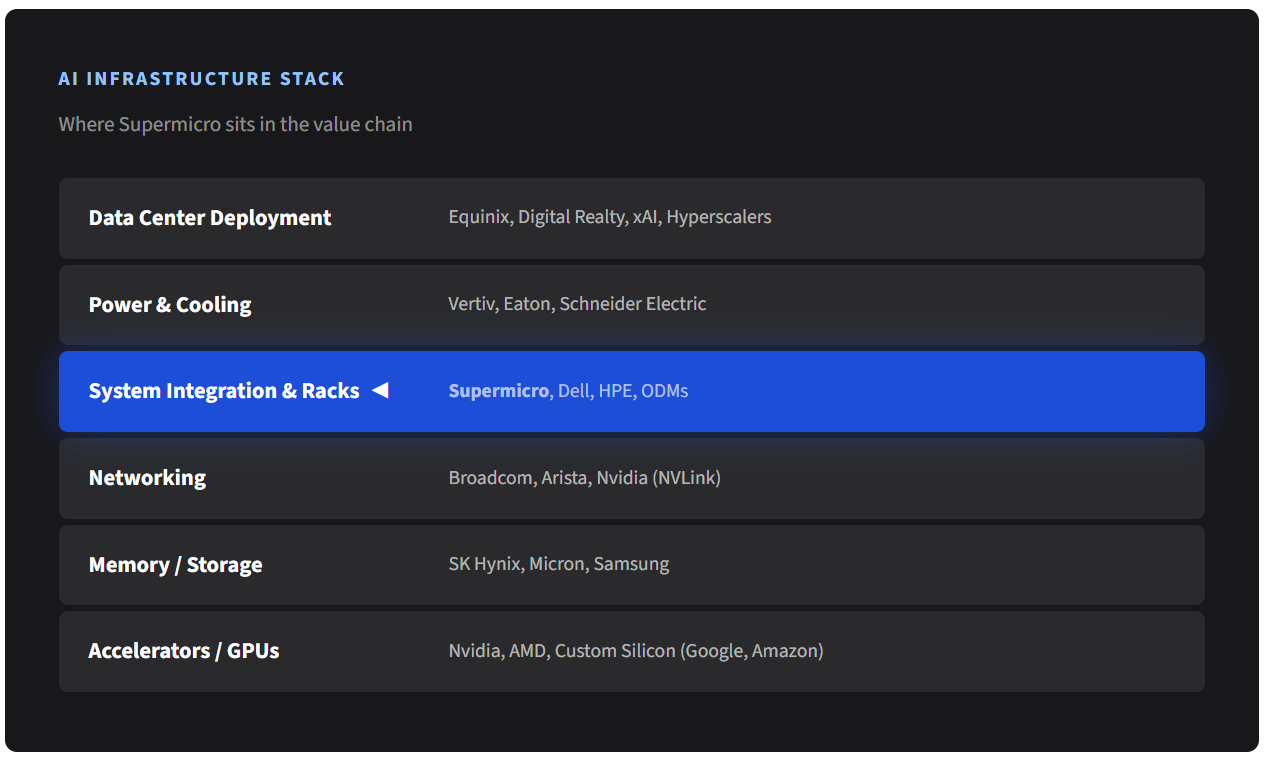

Supermicro’s Place in the AI Infrastructure Stack

Understanding Supermicro requires understanding what it is — and what it is not. The company is not a semiconductor company. It does not design GPUs, CPUs, or memory chips. It is not a cloud platform operator. It does not generate recurring software revenue. What Supermicro does is operate at the system integration layer: taking accelerators from Nvidia and AMD, memory from SK Hynix and Micron, networking components from Broadcom, and assembling them into deployable rack-scale systems with custom thermal, power, and mechanical design.

This layer matters more than it once did. Traditional enterprise servers were relatively simple: a motherboard, CPUs, RAM, and storage in a standardized 1U or 2U enclosure. AI servers are different. A single Nvidia GB300 NVL72 rack contains 72 GPUs and 36 Grace CPUs, requires liquid cooling capable of dissipating 150 kW+ per rack, needs high-speed NVLink and InfiniBand interconnects, and must be validated as a complete system before deployment. Integration complexity has risen by an order of magnitude.

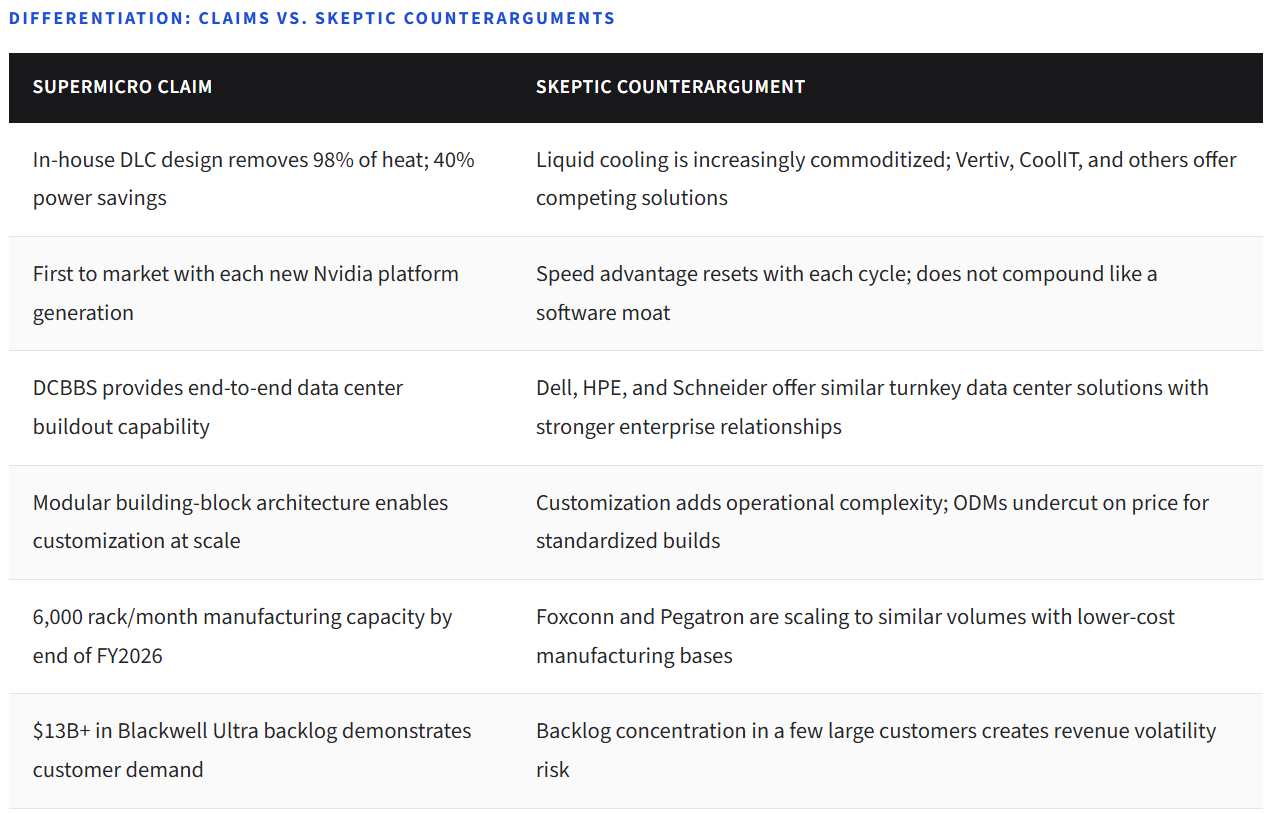

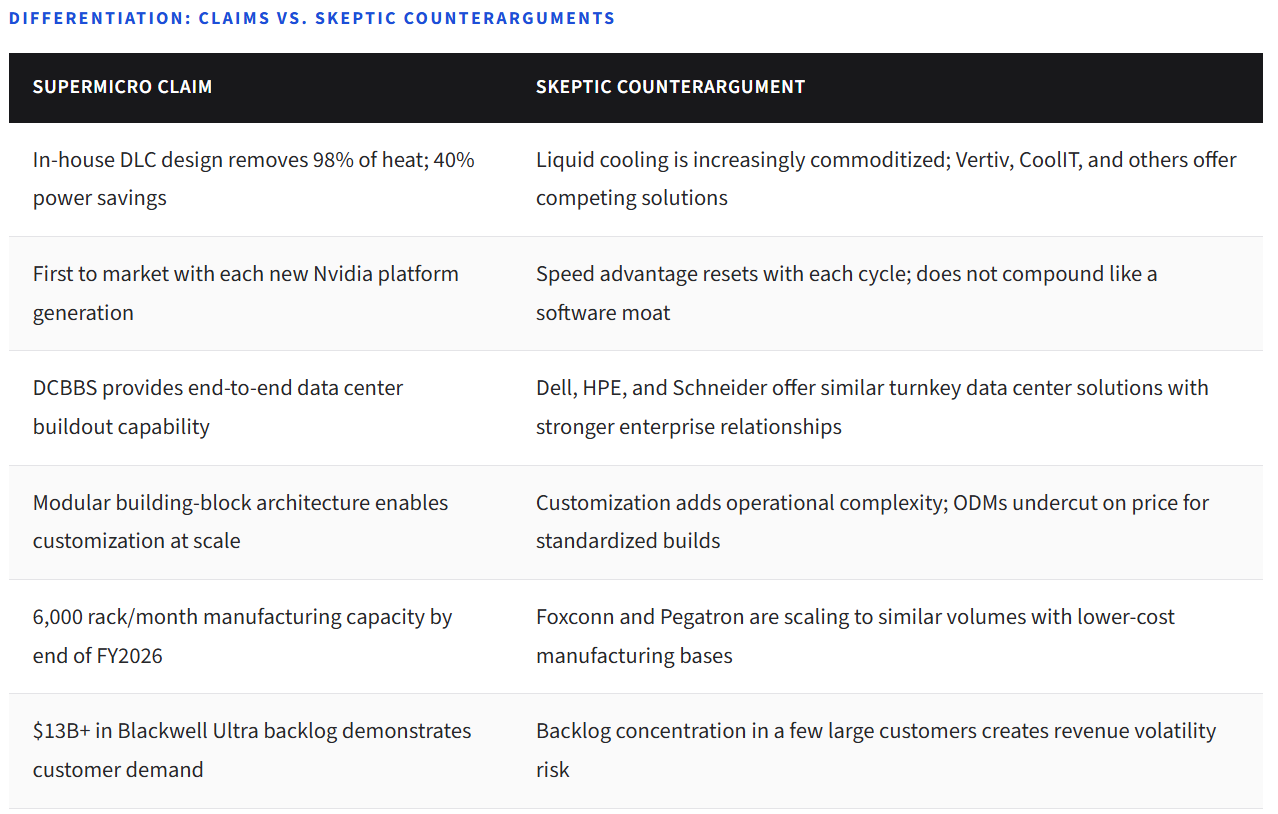

Supermicro’s argument is that this complexity makes the integrator role more strategically valuable. Critics counter that integrating third-party components, however complex, still results in thin margins and limited pricing power. Both sides have evidence.

Section IV

How Supermicro Makes Money

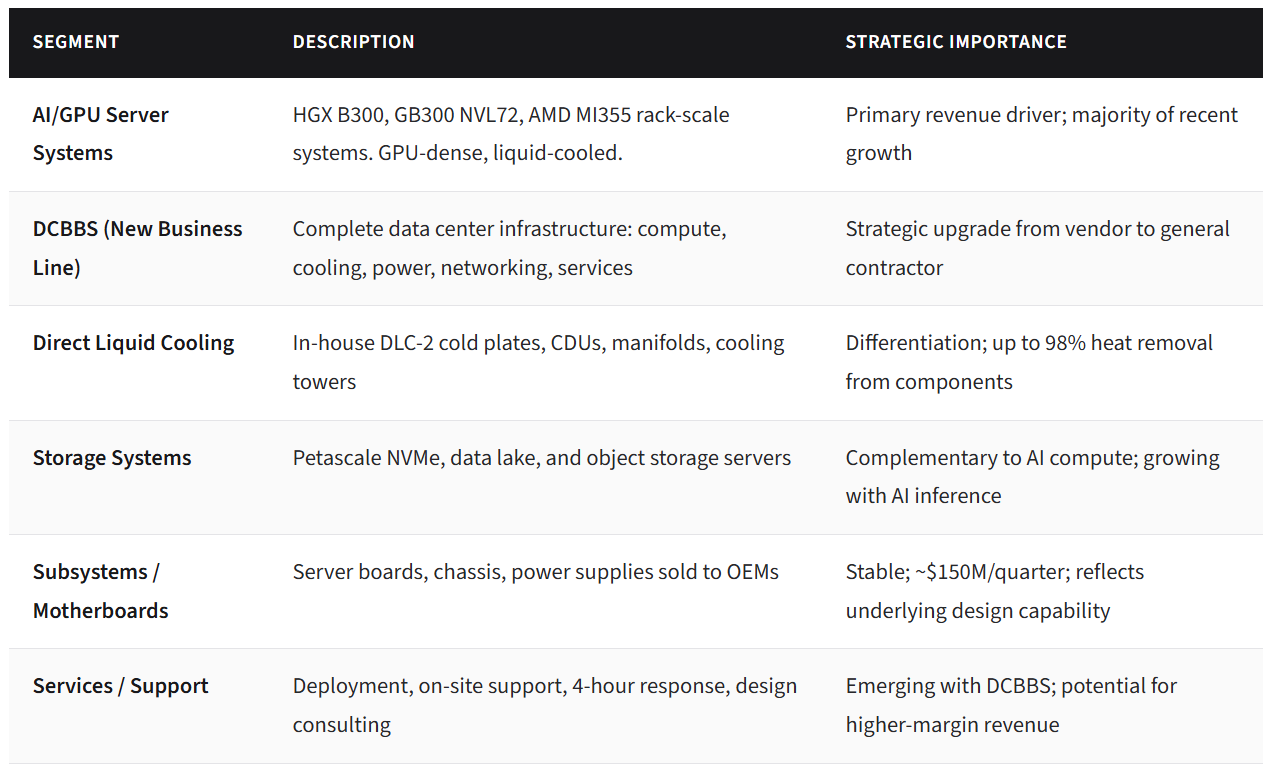

Supermicro generates revenue across several product categories, though AI-driven server and rack-scale systems now dominate. The company designs and manufactures its own motherboards, chassis, and power supplies in-house, at facilities in San Jose, Taiwan, and the Netherlands, which gives it tighter control over system design than a pure assembler has. It then integrates GPUs, CPUs, memory, and networking from partners into complete systems.

The DCBBS initiative represents a strategic evolution from server vendor to data center infrastructure contractor. Under DCBBS, Supermicro packages compute, storage, liquid cooling infrastructure, power distribution, networking, cabling, management software, and deployment services into integrated buildout solutions. The company claims this approach can reduce data center time-to-online to as little as three months.

Why “Building the Box” Can Still Be Strategically Valuable

In a rapidly evolving compute market, the ability to design, validate, and ship systems on the latest silicon faster than competitors creates real, if potentially temporary, economic value. Supermicro was among the first to ship Nvidia Blackwell-based systems at volume, and it is already positioning for Nvidia’s next-generation Vera Rubin platforms. This first-mover advantage in system design allows Supermicro to capture demand during the period of highest urgency and lowest price sensitivity.

The question is whether this speed advantage compounds or resets with each GPU generation.

Section V

Why AI Changes the Server Business

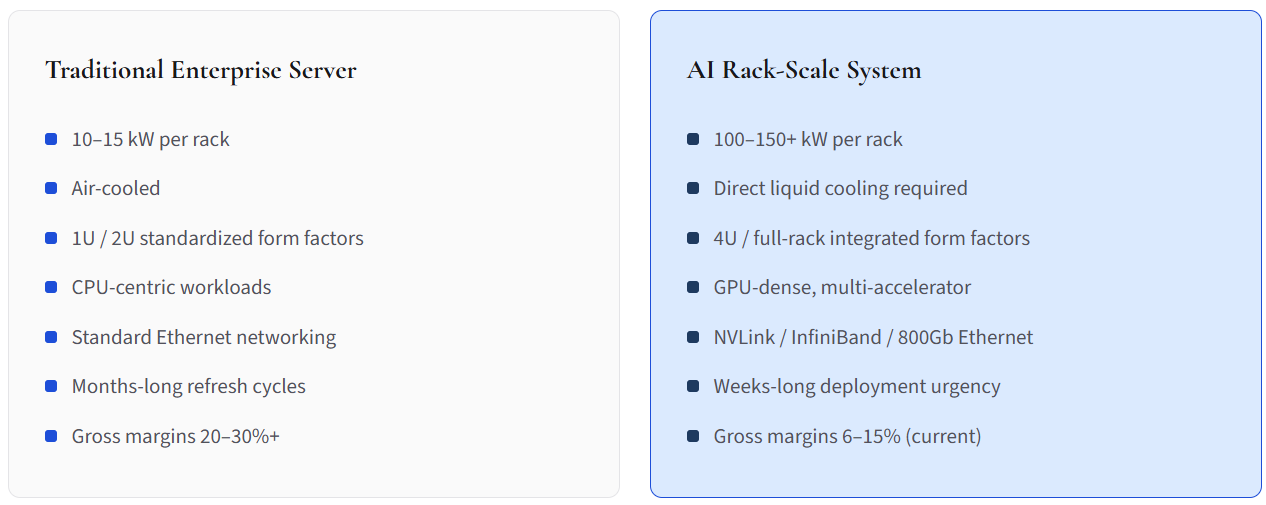

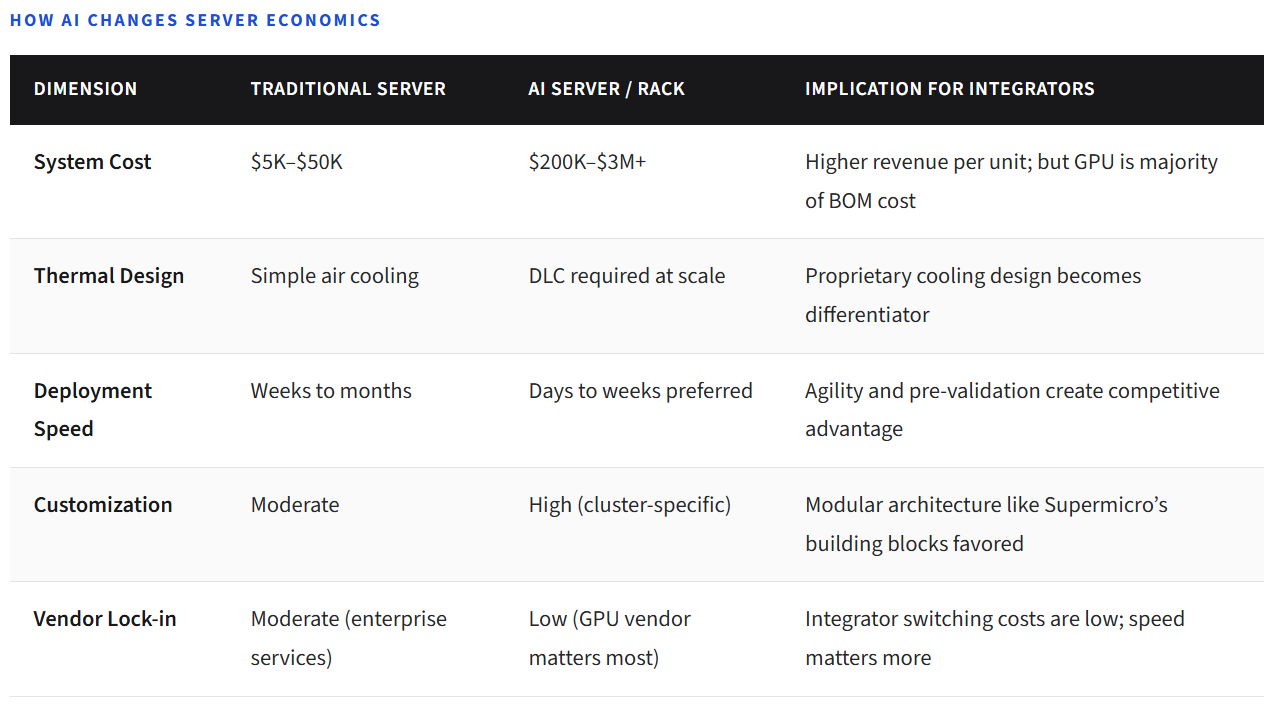

The transition from traditional enterprise servers to AI-optimized infrastructure represents a fundamental shift in system design complexity, power density, thermal management, and deployment velocity. This is not an incremental change — it alters the economics of the entire integration layer.

A traditional enterprise server rack might consume 10–15 kW and use air cooling. An AI training rack based on Nvidia’s GB300 NVL72 platform can draw 150 kW+ and requires direct liquid cooling. The networking requirements shift from standard Ethernet to NVLink/NVSwitch interconnects with InfiniBand or high-speed Ethernet fabrics. Storage must support the throughput demands of multi-trillion-parameter model training. Every aspect of the system (mechanical, thermal, electrical, and logical) must be co-designed.
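The density gap is what forces the redesign. A minimal sketch comparing the rack power figures quoted above, treating 150 kW as a floor for a GB300 NVL72-class rack and 10–15 kW as the traditional range:

```python
# Rack power figures quoted in this report, in kW.
TRADITIONAL_RACK_KW = (10.0, 15.0)  # typical air-cooled enterprise rack
AI_RACK_KW = 150.0                  # GB300 NVL72-class rack; "150 kW+" so this is a floor

# Dividing by the heavier traditional rack gives the conservative ratio.
low_ratio = AI_RACK_KW / max(TRADITIONAL_RACK_KW)
high_ratio = AI_RACK_KW / min(TRADITIONAL_RACK_KW)

print(f"One AI rack draws about {low_ratio:.0f}x to {high_ratio:.0f}x a traditional rack")
```

A 10x to 15x jump in per-rack power is what puts power delivery and cooling, not just compute, at the center of system design.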

These shifts may favor agile, purpose-built integrators over slower-moving legacy enterprise vendors. But they also attract new competitors: Foxconn, Pegatron, Sanmina, and other EMS providers are all scaling AI server operations for the Blackwell cycle.

Section VI

Direct Liquid Cooling, Rack-Scale Systems, and Product Differentiation

Supermicro’s strongest claim to differentiation centers on three capabilities: its in-house direct liquid cooling (DLC) technology, its modular rack-scale design architecture, and its speed-to-market with each new GPU platform generation.

The DLC-2 technology stack includes Supermicro-designed cold plates that remove up to 98% of heat directly from GPUs and CPUs, in-rack coolant distribution units (CDUs) supporting up to 250 kW of cooling capacity, and cooling towers for closed-loop systems. The company claims this technology reduces data center power consumption by up to 40% and, because it can run on 45°C warm water, eliminates the need for compressor-based chilled water systems.
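One way to read the 98% heat-capture figure is to ask how much heat is still left for room air to remove. This sketch assumes, as a best case, that the 98% applies to the rack's entire heat load (the claim as stated covers heat from GPUs and CPUs, so the real residual air load may be higher), and uses the 150 kW rack figure and 250 kW CDU capacity quoted in this report.

```python
# Figures quoted in this report, in kW.
CDU_CAPACITY_KW = 250.0  # in-rack CDU cooling capacity cited for DLC-2
AI_RACK_KW = 150.0       # GB300 NVL72-class rack power floor
HEAT_CAPTURE = 0.98      # "up to 98%"; assumed here to apply to the whole rack (best case)


def residual_air_heat_kw(rack_heat_kw: float, capture: float) -> float:
    """Heat left for air cooling after the liquid loop removes `capture` of it."""
    return rack_heat_kw * (1.0 - capture)


for rack_kw in (AI_RACK_KW, CDU_CAPACITY_KW):
    residual = residual_air_heat_kw(rack_kw, HEAT_CAPTURE)
    print(f"{rack_kw:.0f} kW rack: {residual:.1f} kW left for air cooling")
```

Under this best-case assumption, even a rack at full CDU capacity leaves about 5 kW for air cooling, less than the 10–15 kW a traditional air-cooled rack dissipates.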

For rack-scale systems, the DCBBS framework allows Supermicro to deliver pre-validated, factory-tested clusters — including 256-node AI Factory configurations with 2,048 GPUs — as ready-to-deploy packages. This is a meaningful operational advantage for customers racing to bring AI compute online.
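The cluster figures are internally consistent with eight-GPU server nodes, a common building block for Nvidia HGX-based systems. A trivial check, assuming every node in the configuration is identical:

```python
# Cluster figures quoted in this report.
NODES = 256
TOTAL_GPUS = 2_048

# divmod returns (quotient, remainder); a zero remainder means every node
# can hold the same number of GPUs.
gpus_per_node, leftover = divmod(TOTAL_GPUS, NODES)
print(f"{gpus_per_node} GPUs per node, {leftover} left over")  # 8 GPUs per node, 0 left over
```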

The skeptic’s counterargument: liquid cooling technology is not proprietary in a defensible sense. Vertiv, CoolIT, and others offer competing solutions. Dell and HPE are rapidly scaling their own liquid cooling capabilities. And the core GPU-to-system integration process, while complex, does not create the kind of software-like switching costs that generate durable pricing power.

Section VII

Competitive Position: Dell, HPE, ODMs, and Hyperscaler In-House Builds

The AI server market is intensely competitive and getting more so. IDC data for Q4 2024 showed Supermicro with approximately 6.5% of global server revenue, virtually tied with Dell for the top position that quarter. For full-year 2025, Dell and Supermicro finished in a close tie at the top of the branded server market, each near 10% share, with ODM Direct commanding 53% of quarterly revenue — a striking indicator of where hyperscaler demand flows.

Dell projects $20 billion in AI server shipments for FY2026 and brings stronger enterprise relationships, a broader IT portfolio, and more robust services infrastructure. HPE emphasizes its AI Factory solutions and liquid cooling expertise. Lenovo posted 70% year-over-year server revenue growth in Q4 2024. And critically, EMS providers like Foxconn, Pegatron, and Sanmina are expanding their roles in the Blackwell cycle, competing directly for hyperscale and tier-2 cloud customers.

Supermicro’s competitive edge — speed to market, GPU supplier relationships, DLC capability — is real but not necessarily durable. The question is whether agility compounds or whether larger, better-capitalized competitors eventually close the gap.

Section VIII