The Chip Atlas

Who owns the world's most important technology. Who is catching up. Who is falling behind.

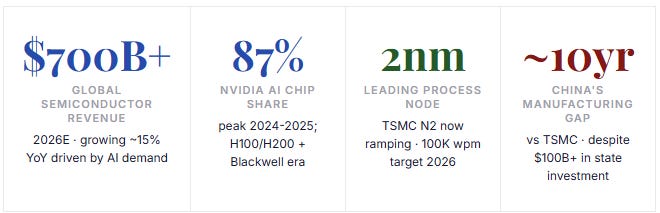

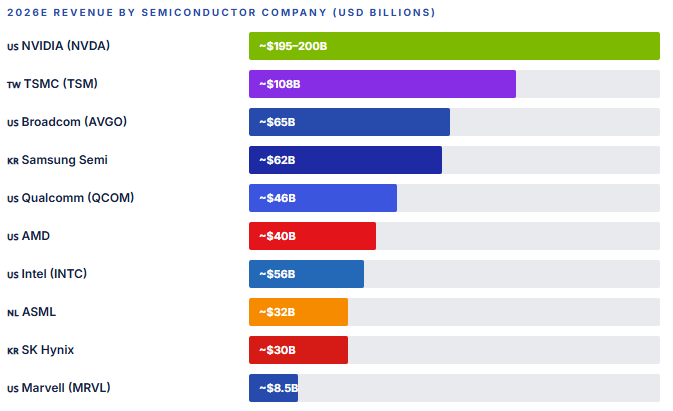

The semiconductor industry is the most strategically contested sector on earth. Every AI model, every smartphone, every fighter jet, every data center runs on silicon. We map the entire global landscape — from NVIDIA's near-monopoly on AI compute to Taiwan's existential manufacturing leverage, from AMD's comeback to China's decade-long struggle to build chips without Western help. We cover the leaders, the challengers, the dark horses, and the one Dutch company whose machines hold the entire thing together.

The Scale of the Industry

Where the World Stands in April 2026

Semiconductors are the oil of the 21st century — except more concentrated, more technically demanding, and more geopolitically explosive. No other manufactured product requires the same combination of physics mastery, supply chain precision, and capital intensity. A single cutting-edge TSMC fab costs $20+ billion to build and takes five years to reach full production. The machines inside it cost $200 million each and are made by one company, in the Netherlands, that has no meaningful competition. The chips those machines produce are designed in California, Taiwan, or South Korea, and are irreplaceable for virtually every advanced technology on earth.

This is not a normal market. It is a geopolitical stack. Understanding who is ahead, who is catching up, and what structural advantages are genuinely durable requires looking at every layer: design, manufacturing, memory, software, and equipment. We cover each one.

The Uncontested King

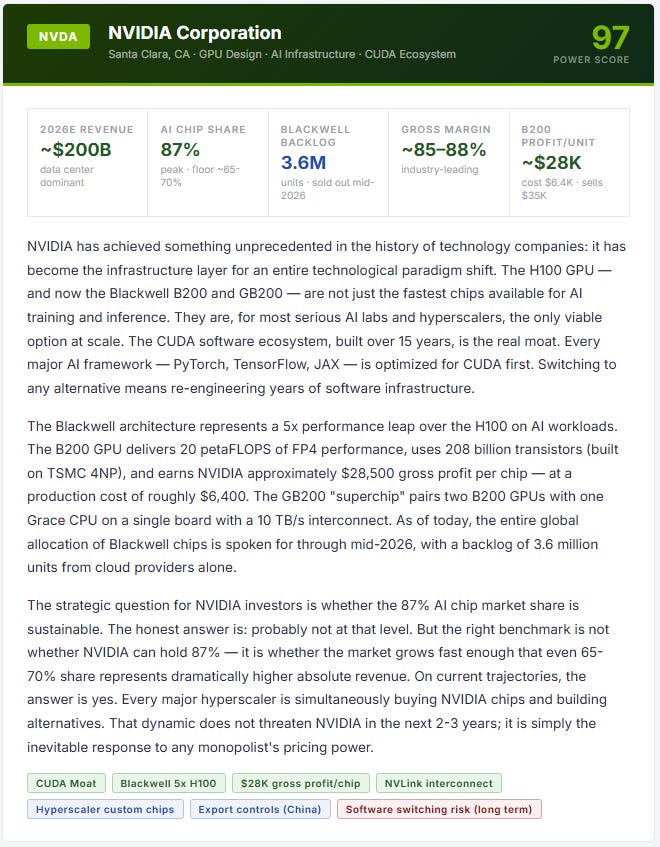

NVIDIA — The $3 Trillion Company That Owns AI Compute

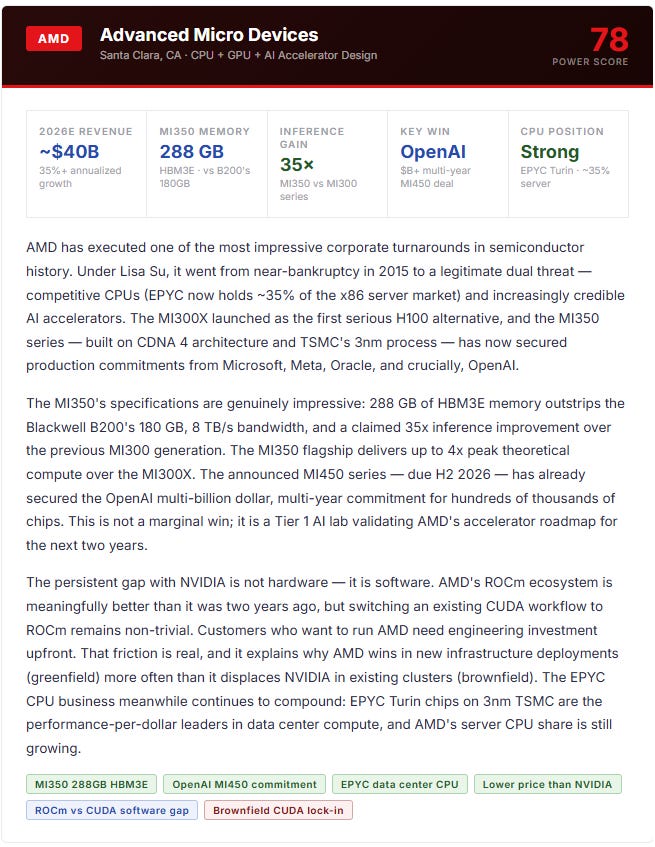

The Challenger

AMD — The Legitimate Threat That Keeps Getting Closer

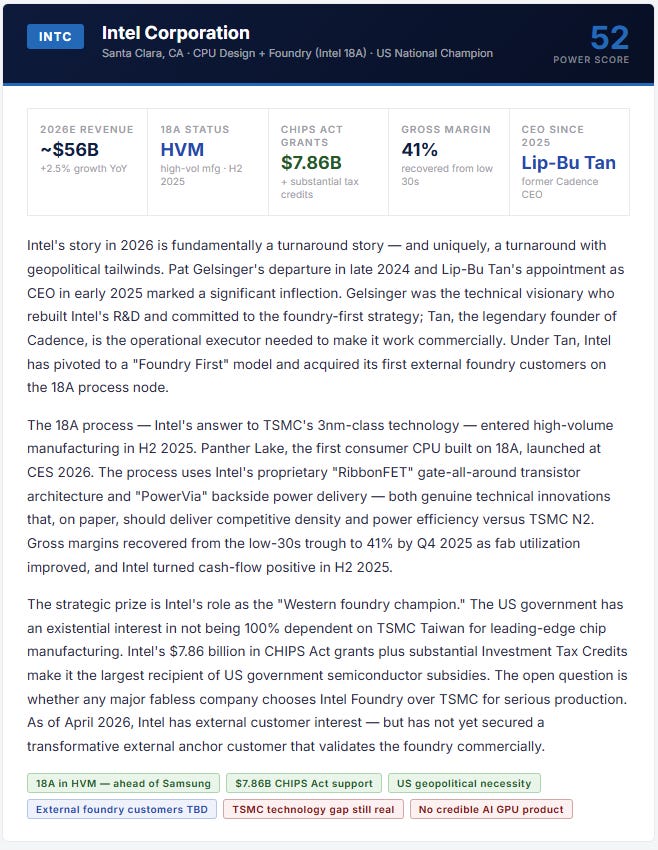

The Fallen Giant

Intel — Can the National Champion Finally Execute?

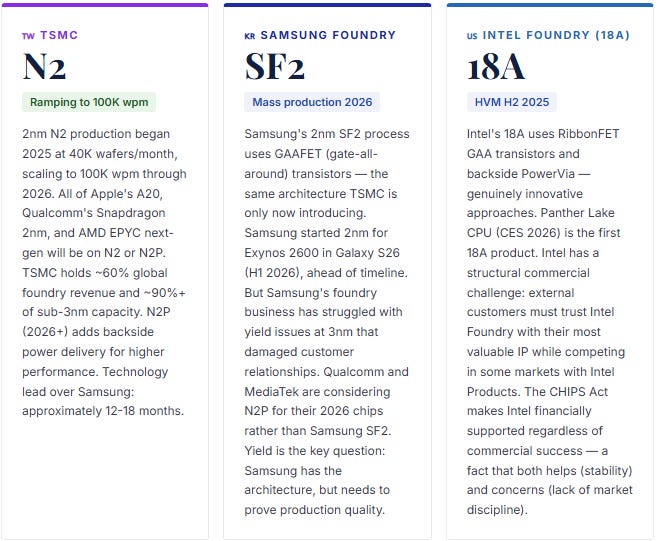

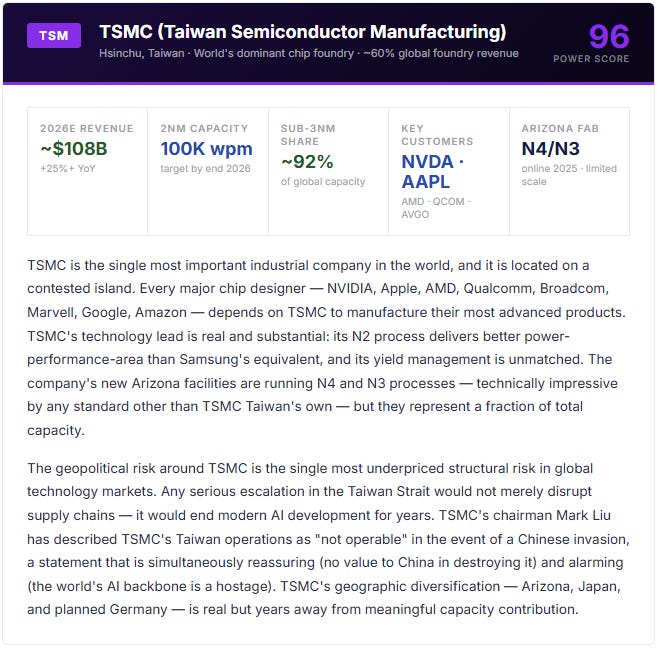

Manufacturing Layer

The Foundry Race — Who Makes the World’s Chips

The foundry industry is where geopolitical risk is most concentrated. Approximately 92% of the world’s most advanced semiconductor manufacturing (below 7nm) happens in Taiwan — a single island, roughly the size of Maryland, that sits 100 miles off the coast of mainland China. The implications of that concentration are so alarming that the US, EU, Japan, and South Korea have all launched emergency subsidy programs to diversify. None of those programs change the fundamental reality for 2026: TSMC Taiwan is where the leading chips are made.

The Mobile and Edge Battle

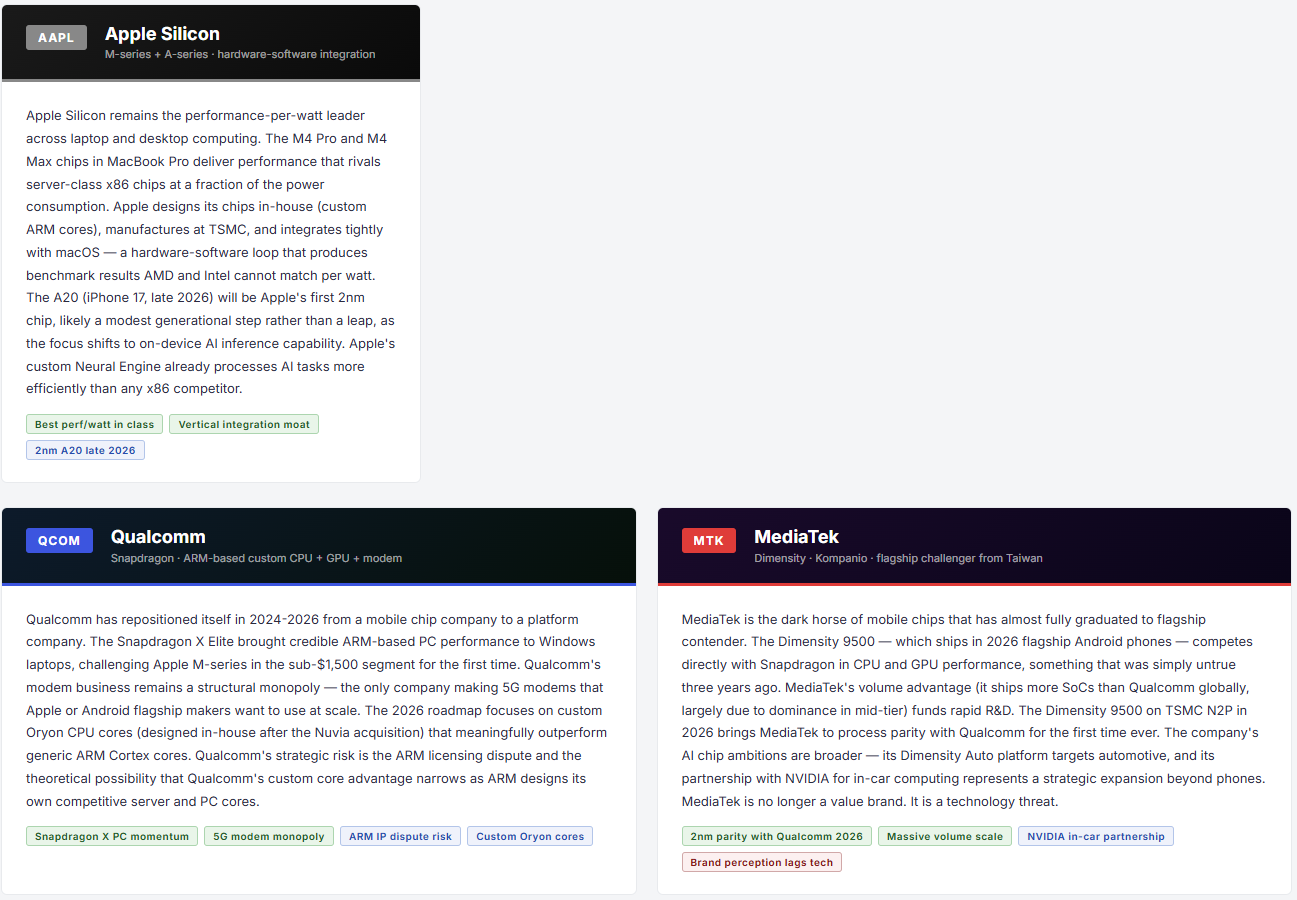

Qualcomm vs Apple Silicon vs MediaTek — The 2nm Convergence

The mobile chip market is undergoing its own inflection in 2026. For the first time, three companies (Apple, Qualcomm, and MediaTek) are simultaneously launching 2nm devices. Apple will use TSMC N2 for the A20 chip powering the iPhone 18. Qualcomm’s Snapdragon 8 Elite successor uses TSMC N2P, the performance-enhanced variant of N2. MediaTek’s next Dimensity flagship does the same. With all three on the same node family, competition has shifted from manufacturing prowess to architecture, software integration, and AI processing capability.

The Silent Threat to NVIDIA

Big Tech’s Custom Silicon — Google, Amazon, Microsoft, Meta

The most significant long-term risk to NVIDIA’s market share is not AMD. It is Google, Amazon, Microsoft, and Meta — four companies that collectively spend hundreds of billions of dollars per year on AI infrastructure, and all four of which have now built production-grade custom AI accelerators specifically designed to reduce their NVIDIA dependency. This is not experimentation. It is production at scale.

These are not marginal chips. Amazon’s Trainium3 is being used by Anthropic and OpenAI for real training workloads. Google has trained multiple generations of Gemini on TPUs. Microsoft’s Maia targets the inference bottleneck — where cost-per-token directly impacts Azure’s margin. Meta announced four generations of MTIA (300-500 series) in March 2026, with the v3 flagship targeting 25x compute gains over the original, built on TSMC N3 with RISC-V architecture.

The common thread is inference. Custom silicon wins most clearly in inference — serving AI models at scale where the same model runs millions of identical operations per second. Training remains more complex and NVIDIA’s CUDA advantage is most entrenched there. But inference is where AI spending is now shifting. As models mature and stabilize architecturally, the case for purpose-built inference chips (lower cost, lower power, same throughput for specific model families) becomes more compelling.

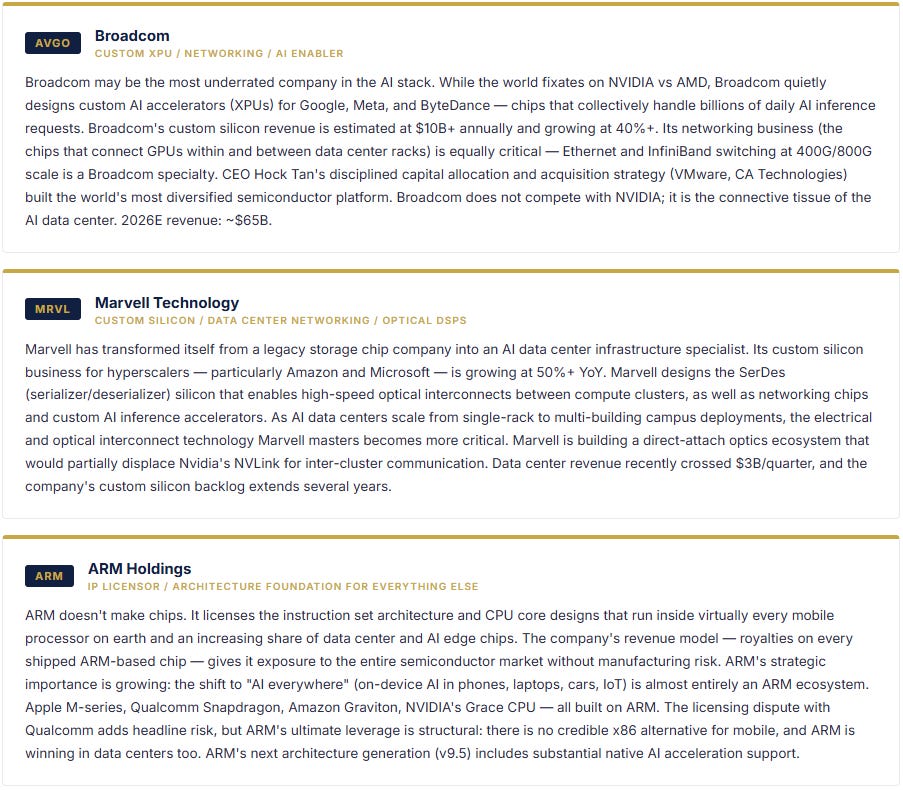

BROADCOM AND MARVELL: THE ENABLERS

The hyperscaler custom silicon build-out has created two massive winners that most retail investors overlook: Broadcom (AVGO) and Marvell (MRVL). Both companies design custom AI accelerators (XPUs) for hyperscalers that don’t want to do the full chip design themselves. Broadcom builds the Google TPU and chips for Meta and ByteDance; Marvell serves Amazon and Microsoft on networking and custom compute. Broadcom’s custom silicon revenue is estimated at $10B+ annually and growing at 40%+. Marvell’s data center revenue exceeded $3B in its most recent quarter. Both companies benefit from NVIDIA’s pricing power without competing against NVIDIA directly, a uniquely attractive position.